nixie-querygen-v2

A Mistral-7B-v0.1 model fine-tuned on a query generation task. Main use cases:

- synthetic query generation for downstream embedding fine-tuning tasks - when you have only documents and no queries/labels. This task can be done with the nixietune toolkit, see the `nixietune.qgen.generate` recipe.

- synthetic dataset expansion for further embedding training - when you DO have query-document pairs, but only a few. You can fine-tune `nixie-querygen-v2` on the existing pairs, and then expand your document corpus with synthetic queries (which are still based on your few real ones). See the `nixietune.qgen.train` recipe.

The idea behind the approach is taken from the docT5query model. See the original paper: Rodrigo Nogueira and Jimmy Lin, "From doc2query to docTTTTTquery".

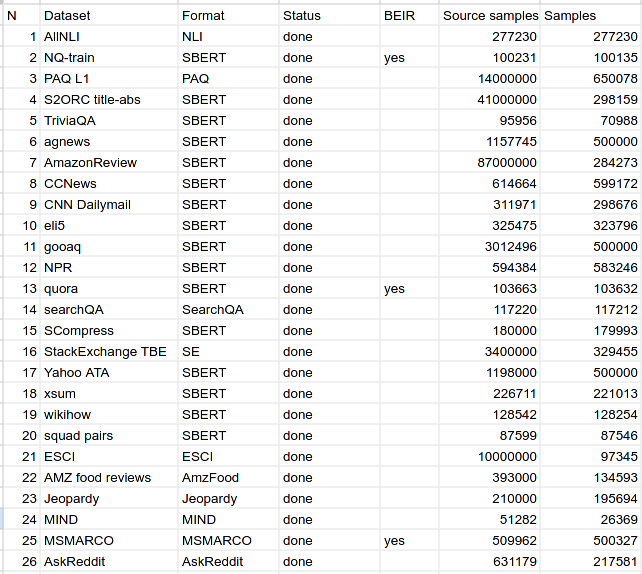

Training data

We used 200k query-document pairs sampled randomly from a diverse set of IR datasets:

Flavours

This repo has multiple versions of the model:

- model-*.safetensors: PyTorch FP16 checkpoint, suitable for downstream fine-tuning

- ggml-model-f16.gguf: GGUF F16 non-quantized llama-cpp checkpoint, for CPU inference

- ggml-model-q4.gguf: GGUF Q4_0 quantized llama-cpp checkpoint, for fast (and less precise) CPU inference.

Prompt formats

The model accepts the following prompt format:

<document text> [short|medium|long]? [question|regular]? query:

Some notes on format:

- `[short|medium|long]` and `[question|regular]` fragments are optional and can be skipped.

- the prompt suffix `query:` has no trailing space, be careful.
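
The format rules above can be sketched as a small helper. This is only an illustration: `build_prompt`, `LENGTHS` and `KINDS` are hypothetical names, not part of nixietune, and the sketch assumes the fragments are joined with single spaces, as in the inference example on this page.

```python
from typing import Optional

# Optional fragments accepted by the prompt format.
LENGTHS = ("short", "medium", "long")
KINDS = ("question", "regular")


def build_prompt(document: str, length: Optional[str] = None,
                 kind: Optional[str] = None) -> str:
    """Assemble '<document> [length] [kind] query:' with no trailing space."""
    if length is not None and length not in LENGTHS:
        raise ValueError(f"length must be one of {LENGTHS}, got {length!r}")
    if kind is not None and kind not in KINDS:
        raise ValueError(f"kind must be one of {KINDS}, got {kind!r}")
    # Fragments left as None are skipped; the suffix is always 'query:'.
    parts = [document.strip(), length, kind, "query:"]
    return " ".join(p for p in parts if p)


print(build_prompt("git lfs track will begin tracking a new file.", "short", "regular"))
```

Both modifiers default to `None`, so `build_prompt(doc)` yields the shortest valid prompt, `<document text> query:`.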

Inference example

With llama-cpp and the Q4 model, inference can be done on a CPU:

    $ ./main -m ~/models/nixie-querygen-v2/ggml-model-q4.gguf -p "git lfs track will \
    begin tracking a new file or an existing file that is already checked in to your \
    repository. When you run git lfs track and then commit that change, it will \
    update the file, replacing it with the LFS pointer contents. short regular query:" -s 1

    sampling:
    repeat_last_n = 64, repeat_penalty = 1.100, frequency_penalty = 0.000, presence_penalty = 0.000
    top_k = 40, tfs_z = 1.000, top_p = 0.950, min_p = 0.050, typical_p = 1.000, temp = 0.800
    mirostat = 0, mirostat_lr = 0.100, mirostat_ent = 5.000
    sampling order:
    CFG -> Penalties -> top_k -> tfs_z -> typical_p -> top_p -> min_p -> temp
    generate: n_ctx = 512, n_batch = 512, n_predict = -1, n_keep = 0

    git lfs track will begin tracking a new file or an existing file that is
    already checked in to your repository. When you run git lfs track and then
    commit that change, it will update the file, replacing it with the LFS
    pointer contents. short regular query: git-lfs track [end of text]
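
The same run could probably be reproduced from Python with the llama-cpp-python bindings. The sketch below is a rough, untested equivalent under a few assumptions: `extract_query` and `generate_query` are hypothetical helper names, the sampling values are copied from the CLI log above, and `max_tokens=32` is an assumed cap that the CLI run does not use.

```python
def extract_query(raw_output: str, prompt: str) -> str:
    """Strip the echoed prompt and the end-of-text marker from CLI-style output."""
    text = raw_output
    if text.startswith(prompt):
        text = text[len(prompt):]
    return text.replace("[end of text]", "").strip()


def generate_query(model_path: str, prompt: str, max_tokens: int = 32) -> str:
    """Run a GGUF checkpoint on CPU via llama-cpp-python (imported lazily)."""
    from llama_cpp import Llama  # pip install llama-cpp-python

    llm = Llama(model_path=model_path, n_ctx=512, seed=1, verbose=False)
    # Sampling values mirror the CLI log; max_tokens is an assumption.
    result = llm(prompt, max_tokens=max_tokens, temperature=0.8, top_k=40,
                 top_p=0.95, min_p=0.05, repeat_penalty=1.1)
    return result["choices"][0]["text"].strip()


# Post-processing the tail of the CLI output shown above:
prompt = "pointer contents. short regular query:"
print(extract_query(prompt + " git-lfs track [end of text]", prompt))  # git-lfs track
```

`generate_query` is not called here because it needs the model file on disk; with the Q4 checkpoint downloaded it would be invoked as `generate_query("ggml-model-q4.gguf", prompt)`.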

Training config

The model was trained with the following nixietune config:

    {
      "train_dataset": "/home/shutty/data/nixiesearch-datasets/query-doc/data/train",
      "eval_dataset": "/home/shutty/data/nixiesearch-datasets/query-doc/data/test",
      "seq_len": 512,
      "model_name_or_path": "mistralai/Mistral-7B-v0.1",
      "output_dir": "mistral-qgen",
      "num_train_epochs": 1,
      "seed": 33,
      "per_device_train_batch_size": 6,
      "per_device_eval_batch_size": 2,
      "bf16": true,
      "logging_dir": "logs",
      "gradient_checkpointing": true,
      "gradient_accumulation_steps": 1,
      "dataloader_num_workers": 14,
      "eval_steps": 0.03,
      "logging_steps": 0.03,
      "evaluation_strategy": "steps",
      "torch_compile": false,
      "report_to": [],
      "save_strategy": "epoch",
      "streaming": false,
      "do_eval": true,
      "label_names": [
        "labels"
      ]
    }
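
The fractional `eval_steps` and `logging_steps` values look like ratios of the total number of training steps, which is how the Hugging Face Trainer treats values below 1. The sketch below estimates the resulting interval. It rests on assumptions this card does not state: that nixietune passes these fields through to the Trainer unchanged, that training ran on a single device, and that the training split holds about 200,000 pairs (the card only says 200k pairs were sampled overall). `eval_interval` is a hypothetical helper.

```python
import math


def eval_interval(num_examples: int, per_device_batch: int, num_devices: int = 1,
                  grad_accum: int = 1, epochs: int = 1, eval_steps: float = 0.03):
    """Return (total optimizer steps, steps between evaluations)."""
    batches_per_epoch = math.ceil(num_examples / (per_device_batch * num_devices))
    updates_per_epoch = max(batches_per_epoch // grad_accum, 1)
    max_steps = math.ceil(epochs * updates_per_epoch)
    # Values below 1 are read as a fraction of the total step count.
    interval = math.ceil(max_steps * eval_steps) if eval_steps < 1 else int(eval_steps)
    return max_steps, interval


# Assumed: 200,000 training pairs on one device, batch size 6 from the config.
print(eval_interval(200_000, 6))  # (33334, 1001)
```

Under these assumptions, evaluation and logging would each fire roughly every 1,000 optimizer steps, about 33 times over the single epoch.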

License

Apache 2.0
